Key Takeaways

- Monolithic applications offer simpler development and deployment workflows but can face scalability challenges as applications grow

- Microservices provide better component isolation and independent scaling but introduce additional complexity in deployment and management

- Container orchestration tools like Kubernetes can effectively support both architectures, but configuration requirements differ significantly

- Your team size, development expertise, and application complexity should drive your choice between monolithic and microservices approaches

- Hybrid approaches like modular monoliths offer a practical middle ground for organizations transitioning their container strategy

Container Architecture: Microservices vs Monolithic at a Glance

Choosing between microservices and monolithic architectures for your containerized applications isn’t just a technical decision—it’s a strategic one that impacts development velocity, operational overhead, and business agility. While microservices have dominated tech discussions in recent years, both approaches have distinct advantages within container environments. The key is understanding which architecture aligns with your specific requirements, team capabilities, and long-term objectives.

Container technology has completely changed the way we deploy applications, regardless of the architecture style. Docker and other container solutions provide a consistent runtime environment that is just as effective for monolithic applications as it is for distributed microservices. However, the way you structure your application within those containers will fundamentally change the way you develop, deploy, and scale your software.

What Makes Monolithic Applications Work in Containers

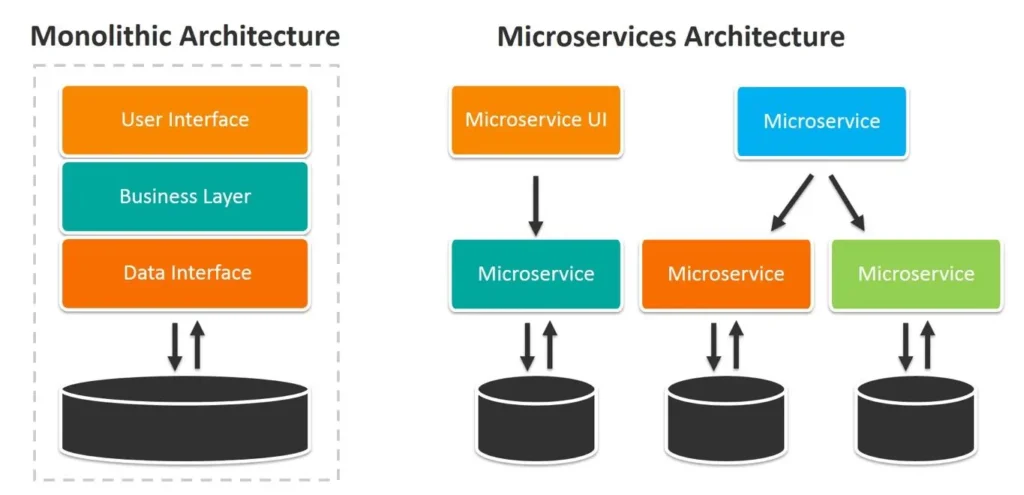

A monolithic application brings all components—from the user interface to business logic and data access layers—into a single deployable unit. When put into a container, this whole application runs as a single process within the container, sharing resources and maintaining a close connection between components. This conventional approach continues to drive many successful applications even though the industry is shifting towards microservices.

Easier Development and Deployment Process

Monolithic applications that are containerized have a much easier development process. Developers deal with a unified codebase where function calls between components occur directly in memory rather than over network interfaces. This makes debugging much easier because the entire application state is in one place. When you’re ready to deploy, you’re managing one container image instead of updating multiple services, making your CI/CD pipelines and deployment strategies much less complicated.

Reduced Operational Complexity

The operational ease of monolithic containers is a significant advantage. By only having one container to keep an eye on, scale, and maintain, you greatly reduce your observability needs. You’ll have fewer monitoring endpoints, less complicated logging aggregation, and simpler alerting rules. Resource allocation is also simplified—you’re dealing with the scaling needs of the whole application rather than juggling resources across many microservices with different demand patterns.

The Surprising Strengths of Monolithic Applications

Monolithic applications have a reputation for being outdated and inefficient, but they can actually be the superior choice in certain situations. For example, applications that involve complex transactions across multiple domains often perform better as monoliths. Because all components of a monolithic application are contained within the same process, there is no need for internal communication over a network. This can greatly decrease latency for operations that require a lot of data. Smaller teams may also find that they are more productive when working with monoliths. This is because monoliths eliminate the need for microservice governance, complicated deployment coordination, and debugging of distributed systems, all of which can take up a lot of a development team’s time.

Additionally, applications that don’t need to scale much or that have regular load patterns might not get enough benefit from the granular scaling of microservices to make up for the added complexity. The simplicity of deploying and managing a single container can often mean new features get to market faster, especially with smaller applications or proof-of-concept projects.

The Advantages of Microservices in Container Settings

Microservices architecture splits applications into specific, loosely linked services that communicate via clearly defined APIs. Each service, which is responsible for a particular business function, runs in its own container and can be developed, deployed, and scaled independently. This method is ideally suited to container orchestration platforms such as Kubernetes, which are excellent at managing distributed workloads.

Scaling Components Separately

Microservices in containers offer the distinct advantage of granular scalability, which is likely the most compelling benefit of this approach. Instead of having to scale the entire application when only one function is experiencing high demand, you can direct additional resources to exactly where they are needed. For example, an e-commerce platform could scale its product catalog service during periods with heavy browsing and scale the checkout service when there are spikes in purchases.

Quicker Development Cycles

Microservices significantly speed up development cycles by allowing multiple teams to work in parallel. Each service has clear boundaries and interfaces, which allows teams to work separately without interfering with each other. This isolation allows a team to update their service without having to coordinate with all the other teams, removing the bottlenecks that are common in monolithic development. Containerization enhances this benefit by providing consistent environments from development to production, reducing the “it works on my machine” problems.

Improved Damage Control and Recovery

In a microservices structure, when a glitch happens, the damage is usually confined to a single service and doesn’t take down the whole application. This damage control means that users may experience a decrease in functionality in one area while the rest of the application continues to work as usual. Containers boost this resilience by simplifying the implementation of health checks, automatic restarts, and quick rollbacks when problems occur. With the right circuit breaking and fallback methods, your application can elegantly manage service failures instead of spiralling into system-wide blackouts.
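The circuit breaking and fallback idea mentioned above can be sketched in a few lines. This is a minimal illustration, not a production library: the class name `CircuitBreaker`, its parameters, and the injectable `clock` are all assumptions made for the example.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing dependency for a while and serves a fallback."""

    def __init__(
        self,
        failure_threshold: int = 3,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def is_open(self) -> bool:
        """True while calls should be short-circuited to the fallback."""
        if self._opened_at is None:
            return False
        # Once the timeout has elapsed, let a trial call through (half-open).
        return self._clock() - self._opened_at < self.reset_timeout

    def call(self, fn: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.is_open():
            return fallback()
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                # Open (or re-open) the circuit from this moment.
                self._opened_at = self._clock()
            return fallback()
        # A success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap each remote call, for example `breaker.call(fetch_recommendations, lambda: [])`, where `fetch_recommendations` is a hypothetical function that talks to another service. Users then see an empty recommendations panel rather than an error page while that service is down.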

Service-Specific Technology Freedom

Microservices free development teams from having to make blanket technology decisions. Each service can use the programming language, framework, or database that best meets its unique needs instead of having to settle for something that will work with the whole application. This freedom is especially beneficial in containerized environments where the container provides a consistent deployment environment while still allowing for variation in how things are implemented. Teams can try out new technologies one service at a time instead of having to rewrite the whole application, making innovation less risky.

Performance Impacts: What the Benchmarks Show

When you compare the performance of monolithic and microservices architectures, each approach has trade-offs rather than one being definitively better than the other. The architecture you choose can significantly affect resource utilization and response times, although the size of the effect depends on the specific characteristics of the application and how it is deployed. Understanding these performance differences is key to making the right decisions for your container strategy based on your specific performance needs.

Boot-up Speed and Resource Use

Monolithic containers often boot up faster than full microservices deployments, which can be a plus for situations where you need to scale or recover quickly. With a monolithic container, you’re booting up one instance of an application. With microservices, you might need to boot up dozens of containers and set up network connections between them. On the flip side, microservices often make better overall use of resources by letting you allocate computing resources to components based on their specific needs rather than having to provision for peak needs across the entire application.
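The resource argument can be made concrete with a toy calculation. The sketch below assumes a deliberately simple model: every monolith replica carries all components, so the replica count is driven by the busiest component, while each microservice is replicated on its own. The component names and numbers are invented for illustration, and the model ignores per-container overhead, which works against microservices in practice.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Component:
    name: str
    memory_mib: int      # assumed memory footprint of one instance
    peak_instances: int  # assumed instances needed at this component's peak


def monolith_memory_mib(components: Sequence[Component]) -> int:
    """Every replica contains every component, sized for the busiest one."""
    replicas = max(c.peak_instances for c in components)
    per_replica = sum(c.memory_mib for c in components)
    return replicas * per_replica


def microservices_memory_mib(components: Sequence[Component]) -> int:
    """Each component is replicated only as much as it needs."""
    return sum(c.memory_mib * c.peak_instances for c in components)


# Hypothetical e-commerce workload.
COMPONENTS = [
    Component("catalog", memory_mib=512, peak_instances=6),
    Component("checkout", memory_mib=256, peak_instances=2),
    Component("auth", memory_mib=128, peak_instances=1),
]

print(monolith_memory_mib(COMPONENTS))       # 6 * (512 + 256 + 128) = 5376
print(microservices_memory_mib(COMPONENTS))  # 3072 + 512 + 128 = 3712
```

Under these assumptions the monolith provisions about 45% more memory at peak, because five of its six replicas carry checkout and auth capacity that is never needed.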

Thinking About Network Latency

One of the biggest performance challenges with microservices is the network communication overhead they introduce. Each call from one service to another adds latency that simply doesn’t exist in monolithic applications where components communicate through in-memory function calls. This overhead can become a problem in applications where latency is a concern, especially when a single user action requires requests to go through multiple services. You can lessen these effects with container networking optimizations and service mesh technologies, but they add another layer of complexity to your infrastructure.
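A quick way to reason about this overhead is to add up the hops a single user action triggers and compare the total with a latency budget. The sketch below uses made-up hop latencies and a made-up 12 ms budget; the service names are hypothetical.

```python
from typing import Iterable


def sequential_ms(hops: Iterable[float]) -> float:
    """Calls made one after another: latencies add up."""
    return sum(hops)


def parallel_ms(branches: Iterable[float]) -> float:
    """Calls made concurrently: the slowest branch dominates."""
    return max(branches)


# Assumed per-hop network latencies in milliseconds.
GATEWAY_TO_ORDERS = 4.0
ORDERS_TO_INVENTORY = 6.0
ORDERS_TO_PRICING = 5.0
BUDGET_MS = 12.0

# Orders calls inventory, waits, then calls pricing.
chained = sequential_ms([GATEWAY_TO_ORDERS, ORDERS_TO_INVENTORY, ORDERS_TO_PRICING])

# Orders calls inventory and pricing at the same time.
fanned_out = GATEWAY_TO_ORDERS + parallel_ms([ORDERS_TO_INVENTORY, ORDERS_TO_PRICING])

print(chained, chained <= BUDGET_MS)        # 15.0 False
print(fanned_out, fanned_out <= BUDGET_MS)  # 10.0 True
```

In a monolith all three steps would be in-memory calls, so none of these hop latencies would appear at all. In a microservices design, restructuring chained calls into concurrent ones is one of the cheaper ways to stay within budget.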

Database Interaction Styles and Speed

There is a significant difference in the way databases are accessed in different architectures, and this can have a big impact on performance. Monolithic applications usually work with one database and can use transactions that include multiple operations. This ensures that the data is consistent, but it can also cause bottlenecks. Microservices, on the other hand, often use a database for each service. This improves isolation and scalability, but it also brings up issues with distributed transactions and eventual consistency.
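The single-database transaction described above looks like this in a small sketch using Python's built-in `sqlite3` module. The `inventory` and `orders` tables and the `place_order` function are hypothetical. The point is only that both writes commit together or not at all.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with hypothetical inventory and orders tables."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, stock INTEGER NOT NULL)")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, sku TEXT NOT NULL, qty INTEGER NOT NULL)"
    )
    conn.execute("INSERT INTO inventory (sku, stock) VALUES ('widget', 5)")
    conn.commit()
    return conn


def place_order(conn: sqlite3.Connection, sku: str, qty: int) -> None:
    """Record an order and decrement stock atomically."""
    # Used as a context manager, the connection commits if the block
    # succeeds and rolls back if it raises.
    with conn:
        conn.execute("INSERT INTO orders (sku, qty) VALUES (?, ?)", (sku, qty))
        cur = conn.execute(
            "UPDATE inventory SET stock = stock - ? WHERE sku = ? AND stock >= ?",
            (qty, sku, qty),
        )
        if cur.rowcount != 1:
            # Raising here also undoes the INSERT above.
            raise ValueError("insufficient stock")
```

With a database per service, the order row and the stock row would live in different databases, so this all-or-nothing guarantee is no longer available from a single transaction and has to be rebuilt with extra coordination between services.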

In practice, database access patterns are often the deciding factor in the performance of the entire application. Microservices may need to use more complex patterns like CQRS (Command Query Responsibility Segregation) or event sourcing to maintain performance while ensuring data consistency across service boundaries. The containerization layer adds minimal overhead to database operations compared to these architectural considerations.

5 Key Considerations for Your Container Strategy

Choosing between monolithic and microservices for your containerized applications isn’t just about technical considerations. Your decision needs to be based on your organization’s unique context, capabilities, and objectives, not just what’s trending in the industry. These five considerations give you a structure for making this important architectural decision.

1. The Complexity and Size of the Application

One of the main factors that can help you decide which architecture is best for you is the complexity of the application. Simple applications with limited domain complexity will not benefit much from microservices and may even suffer from their overhead. However, as the complexity of the application increases, the modularization benefits of microservices become increasingly valuable, especially when different components have different scaling, reliability, or technology needs.

Take into account the current size of your application and where you see it going in the future. Many successful systems start out as monoliths and move towards microservices as they grow, letting teams handle complexity a bit at a time instead of all at once. Containerization provides a consistent way to deploy that can support either path or a slow shift from one to the other.

2. Team Organization and Skills

The structure of your team and its technical skills will play a big role in determining the best container architecture for your organization. Microservices require a deep understanding of distributed systems, API design, containerization, and monitoring. If your team doesn’t have these skills, you may need to train them or hire experts. Smaller teams often find that they can be more productive with monolithic applications, because they can focus their skills instead of having to spread them across multiple services.

Microservices are a good fit for companies that have autonomous product teams responsible for distinct business capabilities. This team structure fits well with service boundaries, allowing each team to own their services from start to finish. On the other hand, companies with centralized development teams may find the coordination requirements of microservices to be too much, making a monolithic approach more appropriate, even with containerization.

3. Scalability Needs

Take a look at the scalability requirements of your application from various angles: load fluctuations, geographic spread, and the rate of feature expansion. Applications that experience significant load variations impacting specific components can greatly benefit from the fine-grained scaling provided by microservices. For instance, an application may need to scale up its authentication service during peak login times while leaving other components at their base capacity.

Think about how containers play into your scalability strategy. Container orchestration platforms like Kubernetes can handle both architectures, but they are particularly good at managing the dynamic scaling of microservices. Monolithic applications in containers are still more efficient at scaling than those not in containers, but they can’t achieve the resource optimization that well-designed microservices can.
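To see what fine-grained scaling means in numbers, the sketch below applies the core calculation that the Kubernetes documentation gives for the Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). It is a simplification that ignores the autoscaler's tolerance band and stabilization behavior, and the service names and utilization figures are invented.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Core HPA ratio calculation, clamped to the configured bounds."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Hypothetical peak-login scenario: target is 50% average CPU utilization.
# The authentication service is running hot; the catalog service is not.
auth = desired_replicas(current_replicas=2, current_metric=90, target_metric=50)
catalog = desired_replicas(current_replicas=3, current_metric=40, target_metric=50)

print(auth)     # ceil(2 * 90 / 50) = ceil(3.6) = 4
print(catalog)  # ceil(3 * 40 / 50) = ceil(2.4) = 3
```

Here only the authentication service grows, from two replicas to four, while the catalog service stays at three. A containerized monolith would apply one such calculation to the whole application and scale every component together.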

4. Frequency of Deployment

Companies that focus on continuous delivery and frequent updates may find microservices more in line with their objectives. The capability to independently deploy individual services lessens the risk of deployment and allows for more frequent updates to specific components of the application. Teams can release important fixes or new features without having to orchestrate a full application release, greatly reducing the time it takes for changes to reach the market.

However, this advantage comes with a more complex deployment pipeline. Each microservice needs its own build, test, and deployment pipeline, which increases the infrastructure and governance overhead. Although container-based deployment can standardize this process, it does not remove the fundamental increase in complexity.

5. Future-proofing with a Maintenance Plan

Think about how long your application will be in use and how your maintenance plan may change over time. Microservices are often a good choice for applications that will be used for a long time because they allow for gradual evolution. This means you can update one service at a time instead of having to rewrite the entire application. This gradual approach is especially useful for systems that need to keep up with changing business needs over a long period of time.

Both architectures reap significant maintenance benefits from containerization, which provides consistent environments and deployment patterns that minimize “it works in development” problems. However, maintaining a microservices ecosystem requires continual investment in cross-cutting concerns such as monitoring, logging, and security, and that investment is greater than what monolithic applications need.

Mixing It Up: The Best of Both Microservices and Monolithic Applications

Choosing between monolithic and microservices architectures doesn’t have to be an either-or decision. Many businesses effectively use hybrid approaches that use containerization to combine elements of both models. These hybrid strategies let teams use different architectural patterns for different parts of their applications based on specific needs instead of sticking with a one-size-fits-all solution.

Using Modular Monoliths as an Intermediate Step

Modular monoliths serve as a practical compromise: a single deployable application with clear internal boundaries between components. This method encourages clean interfaces and separation of concerns while avoiding the distributed systems challenges that full microservices present. Containers improve this strategy by providing isolation at the deployment level while keeping the management of a single application simple.

It’s common for many businesses to use modular monoliths as a stepping stone towards their final architecture. They clearly establish the boundaries that could later become service boundaries if necessary. This method allows teams to concentrate on creating a good domain model and clean interfaces before dealing with the added complexity of distributed systems. When these applications are containerized, it becomes easier for them to evolve into microservices as certain modules become too big for the monolithic architecture.

Using the Strangler Pattern for Gradual Migration

The Strangler Pattern provides a way to gradually and safely transition from monolithic applications to microservices. Instead of taking a high-risk approach and trying to rewrite everything at once, teams can identify the components that would benefit the most from being extracted and slowly move them to separate services. Using containers makes this pattern much easier because it provides a consistent environment for both the monolith and the new microservices.
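At the heart of this pattern is a routing layer in front of the monolith that sends traffic for already-extracted paths to the new services and everything else to the monolith. The sketch below shows only that routing decision. The URLs and path prefixes are hypothetical, and in a real setup this logic would usually live in an ingress controller, API gateway, or reverse proxy rather than in application code.

```python
from typing import Mapping

# Hypothetical backends: the monolith plus the services extracted so far.
MONOLITH_URL = "http://monolith.internal"
EXTRACTED_SERVICES = {
    "/checkout": "http://checkout.internal",
    "/catalog": "http://catalog.internal",
}


def route(
    path: str,
    extracted: Mapping[str, str] = EXTRACTED_SERVICES,
    default: str = MONOLITH_URL,
) -> str:
    """Return the backend that should handle the given request path."""
    # Check longer prefixes first so a more specific route wins.
    for prefix in sorted(extracted, key=len, reverse=True):
        if path == prefix or path.startswith(prefix + "/"):
            return extracted[prefix]
    # Anything not yet extracted still goes to the monolith.
    return default


print(route("/checkout/cart"))  # http://checkout.internal
print(route("/account/login"))  # http://monolith.internal
```

Migrating another component then means deploying the new service and adding one entry to the routing table. Rolling back means removing that entry.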

Adopting a step-by-step strategy enables companies to shape their microservices approach based on practical experience instead of hypothetical planning. Teams can prioritize the extraction of high-value or challenging components, showing the business value before deciding to undergo a full transformation. A lot of companies discover that this slow evolution leads to more effectively designed services than trying to establish all service boundaries from the get-go.

How Container Orchestration Differs Between Architectures

Whether you’re deploying a monolithic application or microservices, you’ll likely be using a container orchestration platform like Kubernetes. However, the way you configure your platform will vary greatly depending on which architectural approach you’re using. By understanding these differences, you can make sure you’re getting the most out of your container orchestration strategy, no matter which architecture you’ve chosen.

Finding and Communicating with Services

There is a big difference in how service discovery is handled in each type of architecture. Monolithic applications usually don’t need much service discovery because most of the components talk to each other internally. When they do need to discover services, it’s mostly for routing traffic from outside to the right container instances. Because of this, containerized monoliths can get by with simpler network setups that have fewer parts to manage.

Microservices require powerful service discovery mechanisms to find and interact with other services. Kubernetes provides built-in service discovery through its Service resources, but many microservices architectures use additional tools like service meshes to manage more complex routing, security, and resilience patterns. These additional layers offer useful features but make your container orchestration configuration more complicated.
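With the built-in mechanism, a Kubernetes Service gets a cluster DNS name of the form `<service>.<namespace>.svc.<cluster-domain>`, where the cluster domain is `cluster.local` by default. The helper below builds such an address. The function name, service names, and ports are assumptions for illustration.

```python
def service_url(
    service: str,
    namespace: str = "default",
    port: int = 80,
    cluster_domain: str = "cluster.local",
    scheme: str = "http",
) -> str:
    """Build the in-cluster URL for a Kubernetes Service."""
    return f"{scheme}://{service}.{namespace}.svc.{cluster_domain}:{port}"


# A hypothetical checkout service calling a hypothetical orders service.
print(service_url("orders", namespace="shop", port=8080))
# http://orders.shop.svc.cluster.local:8080
```

Callers address the stable Service name rather than individual pod IPs, so pods can be rescheduled or scaled without any change to client configuration.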

Monolithic applications use mainly in-process communication, while microservices use network-based protocols. This means that container networking optimizations are far more important for microservices architectures, where the amount of communication between services can be quite large. When designing communication patterns for microservices, teams need to think carefully about latency budgets and network reliability.

How Resources Are Allocated

The way you allocate resources for containers can vary widely depending on the architectural approach you’re using. If you’re dealing with a monolithic application, you’ll usually allocate a large amount of resources to a single container. On the other hand, with microservices, you’ll distribute smaller resource allocations across many containers. This basic difference will shape how you set up resource requests, limits, and horizontal scaling policies on your container orchestration platform.
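The difference shows up directly in the `resources` section of each container spec. The sketch below builds that section as a plain Python dictionary using the real Kubernetes field names (`requests`, `limits`, `cpu`, `memory`). The sizes, the workload names, and the choice to set limits at twice the requests are assumptions for the example.

```python
from typing import Dict, Mapping

ResourceSpec = Dict[str, Dict[str, str]]


def resources(cpu_millicores: int, memory_mib: int, limit_factor: int = 2) -> ResourceSpec:
    """Build a container `resources` section with requests and limits."""
    return {
        "requests": {"cpu": f"{cpu_millicores}m", "memory": f"{memory_mib}Mi"},
        "limits": {
            "cpu": f"{cpu_millicores * limit_factor}m",
            "memory": f"{memory_mib * limit_factor}Mi",
        },
    }


# One large allocation for the monolith (hypothetical sizes).
MONOLITH = {"app": resources(2000, 4096)}

# Several small allocations, each sized for its own service.
MICROSERVICES = {
    "catalog": resources(500, 512),
    "checkout": resources(250, 256),
    "auth": resources(100, 128),
}


def total_requested_cpu_millicores(workloads: Mapping[str, ResourceSpec]) -> int:
    """Sum the CPU requests across a set of workloads."""
    return sum(int(spec["requests"]["cpu"].rstrip("m")) for spec in workloads.values())


print(total_requested_cpu_millicores(MONOLITH))       # 2000
print(total_requested_cpu_millicores(MICROSERVICES))  # 850
```

These totals are per replica set of one. Once horizontal scaling policies apply, each microservice multiplies its own small request independently, while the monolith multiplies the single large one.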

Lessons from Real-World Experiences

Real-world experiences can provide valuable insights to help inform your architectural decisions. Both monolithic and microservices approaches have had their fair share of success and failures. These case studies show that the “best” architecture is more about aligning with the context of the organization than it is about technical superiority.

Netflix’s Transition to Microservices

Netflix is often held up as the poster child for successful microservices implementation. The company began as a DVD rental business running a single monolithic application and has since evolved into a microservices-based streaming giant. The monolith ran into significant scalability issues as the streaming business exploded, and the shift to microservices allowed Netflix to scale individual components independently and speed up feature development through parallel team workflows.


Netflix’s successful transition was largely due to their commitment to supporting infrastructure. They developed a wide range of tools for service discovery, fault tolerance, and monitoring, which later became the Netflix OSS suite and influenced microservices practices across the industry. This investment in infrastructure is a significant commitment that many organizations underestimate when they consider making a similar transition.

Netflix’s containerization strategy evolved in tandem with their architecture: they ultimately adopted containers for consistency while developing advanced orchestration capabilities. Their journey illustrates how containers can enhance microservices, but also underscores the significant operational complexity of managing thousands of services. Most organizations will not operate at the scale of Netflix, making their specific approach potentially unsuitable for direct replication.

Segment’s Return to a Monolith

Segment, a customer data platform, serves as a remarkable exception to the trend, having transitioned from microservices to a more monolithic structure for their data processing pipeline. They encountered operational issues with their microservices implementation, such as intricate debugging, deployment coordination problems, and resource inefficiencies. As a result, they combined key components into what they named a “centrolith” architecture.

Segment’s experience underscores the fact that microservices are not always the best option, especially for data-intensive applications that require complex transactions. Their containerization strategy did not change as their architecture did, showing that containers are beneficial no matter what the application architecture may be. Their journey highlights the importance of choosing an architecture that fits the specific characteristics of an application, rather than simply following industry trends.

Choosing the Best Container Strategy for Your Needs

When deciding between monolithic and microservices architectures for your containerized applications, it’s important to weigh the technical aspects against the realities of your organization. There isn’t a one-size-fits-all solution—only the architecture that best fits your unique situation, needs, and limitations. The most successful organizations make this decision carefully instead of just going along with whatever the industry trend is.

Begin by realistically evaluating your team’s skills, the complexity of your application, and your operational preparedness. Container technology offers benefits for both architectural styles, so first concentrate on which application architecture best meets your business goals. Keep in mind that architecture can change over time—many successful systems start as monoliths and slowly incorporate microservices features as they develop.

Before fully committing, it may be worthwhile to implement a trial project to verify your architectural approach. Containerization makes it fairly easy to experiment with different architectures in a controlled environment. This allows you to collect real-world data on performance, development speed, and operational overhead. This evidence-based approach tends to lead to more successful results than theoretical debates about architectural purity.

Architecture Decision Framework

• Start with business goals, not technology preferences

• Assess team capabilities honestly

• Consider application complexity and growth trajectory

• Evaluate operational readiness for distributed systems

• Begin with the simplest architecture that meets your needs

• Plan for evolution rather than perfection

Frequently Asked Questions

As you evaluate container architectures for your organization, several practical questions about monolithic versus microservices approaches tend to come up. The questions below are the ones development teams ask most often when planning their container strategy, and the answers draw on the experiences of organizations of different sizes and industries.

What additional overhead do microservices bring to a container environment?

As a rough guide, microservices can add 30 to 50% overhead in infrastructure resources and operational complexity compared to a monolithic application with the same functionality, although the actual figure depends heavily on the workload. This overhead comes from several sources: additional network communication, redundant instance capacity for reliability, service discovery infrastructure, and more comprehensive monitoring requirements. Organizations should budget for this increased resource consumption and operational complexity when planning a transition to microservices.

Is Kubernetes capable of effectively running a monolithic application?

Yes, Kubernetes can run monolithic applications effectively. Even for deployments involving a single container, it offers benefits such as automated restarts, scaling, and rolling updates. While you won’t be able to take full advantage of Kubernetes’ features designed for distributed applications, containerized monoliths still have a significant operational advantage over traditional deployment methods.

Set up your monolithic containers with the right resource requests and limits, and think about putting in place liveness and readiness probes to activate Kubernetes’ self-healing abilities. Many companies successfully operate large monolithic applications in Kubernetes and take advantage of its strong container orchestration features.
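On the application side, supporting probes can be as small as two HTTP endpoints. The sketch below uses only Python's standard library. The paths `/healthz` and `/readyz` are a common convention rather than a requirement, and `AppState`, `probe_status`, and `serve` are names invented for this example. Kubernetes HTTP probes treat any status code from 200 up to but not including 400 as success.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


class AppState:
    """Hypothetical flag flipped once startup work (migrations, caches) is done."""

    ready = False


def probe_status(path: str, ready: bool) -> int:
    """Map a probe path to an HTTP status code."""
    if path == "/healthz":
        # Liveness: the process is up and able to answer.
        return 200
    if path == "/readyz":
        # Readiness: only accept traffic once startup has finished.
        return 200 if ready else 503
    return 404


class ProbeHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        self.send_response(probe_status(self.path, AppState.ready))
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Start the probe server; blocks until interrupted."""
    AppState.ready = True
    HTTPServer(("0.0.0.0", port), ProbeHandler).serve_forever()
```

Calling `serve()` starts the endpoint. The liveness probe would then point at `/healthz` and the readiness probe at `/readyz` on the same port. A failing liveness probe leads Kubernetes to restart the container, while a failing readiness probe only removes the pod from Service load balancing until it recovers.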

| Kubernetes Feature | Benefit for Monoliths | Benefit for Microservices |
|---|---|---|
| Horizontal Pod Autoscaling | Scale based on overall application load | Independent scaling per service |
| Rolling Updates | Zero-downtime deployments | Independent service updates |
| Health Checks | Automatic restart of failed instances | Granular health monitoring per service |
| Service Discovery | Basic load balancing | Complex service mesh capabilities |

For monolithic applications, you may find Kubernetes’ resource overhead somewhat high relative to the simplicity of your deployment. Consider whether a simpler container orchestration solution might better match your needs if you’re committed to a monolithic architecture long-term.

How many people do you need on a team to manage a microservices architecture?

A capable microservices team usually has at least five to seven developers plus dedicated DevOps support. This team size ensures that all the necessary skills are covered: application development, API design, containerization, orchestration, monitoring, and security. Teams that are smaller than this often find it difficult to keep up with the infrastructure needs of microservices while also developing the functionality of the application.

The composition of a team is just as important as its size. For a microservices team to be successful, it needs a good balance of development and operational expertise. Team members need to be comfortable working outside of their traditional roles. If this balance isn’t achieved, microservices architectures often have operational blind spots or development inefficiencies.

Companies with a smaller team should start with a monolithic or modular monolithic approach, even if they are using containers. As the team gets bigger and more experienced, they can slowly start to use more microservices and build the operational capabilities they need. This step-by-step approach lowers the risk and lets the team learn and adjust as they go.

How are deployment pipelines different in monolithic versus microservice containers?

Monolithic container pipelines usually have a single build process that packs the whole application into one container image. This simplicity allows for easy CI/CD implementation with thorough integration testing before deployment. Pipeline failures impact the whole application, but troubleshooting is easier because there’s only one build and deployment process to look at.

Microservices need many parallel pipelines, one for each service, with a well-orchestrated coordination of interdependencies and versioning. These pipelines stress quick feedback for individual services while also dealing with the intricacy of partial deployments. Organizations usually require more advanced CI/CD tools to effectively manage microservices deployments, including features for canary releases, feature flags, and automated rollbacks.
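A canary release, for instance, sends a small share of users to the new version of one service while everyone else stays on the stable version. The sketch below shows one simple way to make that split deterministic by hashing a user ID into 100 buckets. The function name and the hashing approach are illustrative assumptions. Service meshes and ingress controllers typically provide weighted routing for this purpose.

```python
import zlib


def use_canary(user_id: str, canary_percent: int) -> bool:
    """Decide whether this user should be routed to the canary version."""
    # CRC32 is stable across runs and machines, unlike Python's built-in
    # hash() for strings, so a given user always lands in the same bucket.
    bucket = zlib.crc32(user_id.encode("utf-8")) % 100
    return bucket < canary_percent


# Hypothetical rollout: start at 5%, then widen to 50%.
users = [f"user-{n}" for n in range(1000)]
at_5 = sum(use_canary(u, 5) for u in users)
at_50 = sum(use_canary(u, 50) for u in users)
print(at_5, at_50)
```

Because the bucket for a user never changes, raising the percentage only adds users to the canary group and never moves anyone back, which keeps the experience consistent during a gradual rollout.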

Can a monolithic container be gradually transformed into microservices?

Yes. For most businesses, the most successful strategy is a gradual transition from a monolithic container to microservices. The strangler pattern offers a tested strategy: identify bounded contexts within your monolith, extract them one by one into separate services, and slowly reroute traffic from the monolith to these new services. Containerization makes this transition much easier by providing consistent deployment patterns across both architectural styles.

Start by defining distinct module boundaries within your monolithic application if they aren’t already there. These boundaries will be the foundation for future service extraction. Prioritize the extraction of components that have clear interfaces, few dependencies on other parts of the monolith, and ideally provide business value through enhanced scalability or development speed.

Microservices architecture is gaining popularity due to its flexibility and scalability. Unlike traditional monolithic applications, microservices allow developers to deploy and manage services independently. This approach is particularly beneficial for container strategies, as it enables more efficient use of resources and easier scaling. Monolithic architectures still have their place for certain use cases. The key to making the right choice is understanding your application complexity, team capabilities, and business goals.